This tool supports the development of an AI Acceptable Use Policy and a clear organisational position on the use of artificial intelligence. It is designed to result in a board-approved governance position rather than a static policy document.

The framework provides a structured approach for organisations to move from informal AI use to a formally governed and documented policy position. It covers all AI types, not just generative AI, and produces outputs suitable for board or governing body review.

For executive directors, it provides a framework to define the organisation’s governance approach to AI across four domains. For non-executive directors, the completed policy provides visibility, oversight and assurance regarding the governance of AI adoption.

Please note: The AI Use Policy Template (Word) and AI Use Implementation Guide are available to download at the bottom of this page.

The process works best with a small group, typically two or three people who collectively understand the business, the technology in use, and the regulatory environment. Allow 2-3 hours for the initial build, then a review cycle with the board before formal approval.

You need to know what AI is in use, both the tools your organisation deliberately invested in and the ones people adopted on their own. Start with any existing IT asset registers or procurement records, then run the Shadow AI Audit (Tool 1.1) to surface what you're not aware of. Together, these form your AI inventory, the foundation for everything that follows.
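As a rough illustration only, merging existing records with audit findings into a single inventory might look like the sketch below. The `InventoryEntry` fields, the function names, and the idea of matching tools by case-insensitive name are all assumptions made for this example; they are not part of the toolkit or of Tool 1.1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryEntry:
    name: str             # tool name as recorded
    sanctioned: bool      # True if it appears in IT asset or procurement records
    found_in_audit: bool  # True if the Shadow AI Audit surfaced it


def build_inventory(known_tools, audit_findings):
    """Merge official records and audit findings into one inventory.

    Names are compared case-insensitively (an assumption for this sketch),
    so "CRM Assistant" and "crm assistant" count as the same tool.
    """
    known = {t.strip().lower(): t.strip() for t in known_tools}
    audited = {t.strip().lower(): t.strip() for t in audit_findings}
    entries = []
    for key in sorted(known.keys() | audited.keys()):
        entries.append(
            InventoryEntry(
                # Prefer the spelling used in the official records.
                name=known.get(key, audited.get(key)),
                sanctioned=key in known,
                found_in_audit=key in audited,
            )
        )
    return entries


def shadow_ai(inventory):
    """Return the names of tools in use that no official record covers."""
    return [entry.name for entry in inventory if not entry.sanctioned]
```

Anything returned by `shadow_ai` is a tool people adopted on their own, and it needs to be classified alongside the deliberately procured ones.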

The downloadable template covers four domains: Acceptable Use Categories, Data and Confidentiality, Accountability and Oversight, and Transparency and Disclosure. For each domain, the template poses the key questions you need to answer and provides example positions ranging from conservative to progressive.

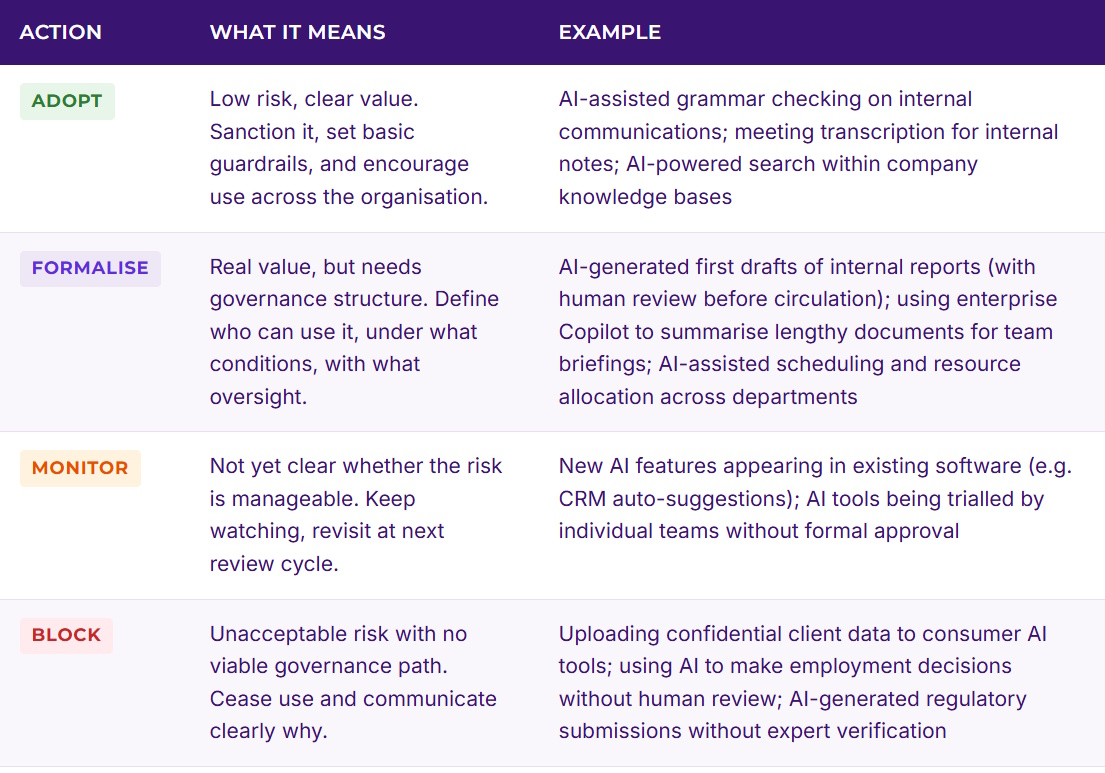

Using the audit findings and the classification framework below, assign every AI use case in your organisation to one of four actions: ADOPT (encouraged, low risk), FORMALISE (valuable but needs governance), MONITOR (borderline, keep watching), or BLOCK (unacceptable risk).

Every policy domain needs a named owner: a person, not a committee. Set a review date no more than six months out. AI is moving fast, and annual policy reviews are not enough.
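The owner and review-date rules are simple enough to check automatically. The following is a minimal sketch, assuming each domain is recorded with an owner name, an approval date and a review date; the function names and the "committee" keyword test are hypothetical and deliberately crude.

```python
import calendar
from datetime import date


def add_months(start, months):
    """Return the date `months` calendar months after `start`.

    The day is clamped to the last day of the target month, so
    31 August plus six months gives the last day of February.
    """
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def check_domain(owner, approved_on, review_on):
    """Return a list of problems for one policy domain (empty if none)."""
    problems = []
    # ASSUMPTION: a blank owner or one containing "committee" is treated as
    # "not a named person". This is a keyword heuristic, not a real check.
    if not owner.strip() or "committee" in owner.lower():
        problems.append("owner must be a named person")
    if review_on <= approved_on:
        problems.append("review date must fall after the approval date")
    elif review_on > add_months(approved_on, 6):
        problems.append("review date is more than six months out")
    return problems
```

Running `check_domain` over all four domains before the draft goes to the board gives a quick view of any domain that still lacks an owner or has a review date set too far ahead.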

Route the draft to the board for formal approval. This is a governance decision, not an operational one. The board should own the policy position even if management drafted it. Once approved, communicate it to every person in the organisation, not by email alone but with a brief explanation of why it matters and what changes for them day to day.

Every AI use case in your organisation falls into one of four categories: ADOPT, FORMALISE, MONITOR or BLOCK. The classification should be based on the data involved, the decision impact, and the regulatory context, not on how "advanced" the technology feels.
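One way to keep classifications tied to those three factors is to record them alongside the chosen action. The sketch below is illustrative only: the `UseCase` record and the `gaps` helper are hypothetical names, and the code deliberately does not decide the action itself, since that judgement belongs to the people doing the classification.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    ADOPT = "encouraged, low risk"
    FORMALISE = "valuable but needs governance"
    MONITOR = "borderline, keep watching"
    BLOCK = "unacceptable risk"


@dataclass
class UseCase:
    name: str
    data_involved: str = ""       # e.g. what data the tool sees
    decision_impact: str = ""     # e.g. what the output is used to decide
    regulatory_context: str = ""  # e.g. which rules, if any, apply
    action: Optional[Action] = None


def gaps(use_case):
    """List what is still missing before the classification is complete."""
    missing = [
        label
        for label, value in (
            ("data involved", use_case.data_involved),
            ("decision impact", use_case.decision_impact),
            ("regulatory context", use_case.regulatory_context),
        )
        if not value.strip()
    ]
    if use_case.action is None:
        missing.append("action")
    return missing
```

A use case with an action but empty factor fields is a sign that the classification was made on instinct rather than on the data, the decision impact and the regulatory context.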

The downloadable template produces a structured policy document covering four domains. Each domain includes your position statements, the rationale behind them, and the practical rules that follow.

Acceptable Use Categories

Which AI tools and use cases are permitted, which need approval, and which are off-limits. Covers both tools people bring in themselves and AI embedded in existing platforms. Includes the classification of every known AI use case using the ADOPT / FORMALISE / MONITOR / BLOCK framework.

Data and Confidentiality

What data can be used with which AI tools, under what conditions. Covers personal data, commercially sensitive information, client data, and intellectual property. Distinguishes between enterprise-grade AI services (with data processing agreements) and consumer tools (where data may be used for training).

Accountability and Oversight

Who is responsible when AI is involved in a decision or output. Establishes that the board owns the policy and management owns the execution. Covers the principle that a human is always accountable for AI-assisted work, defines escalation paths for edge cases, and establishes who reviews and approves AI use in high-stakes contexts. Clarifies the reporting line back to the board.

Transparency and Disclosure

When and how to disclose that AI was involved. Covers internal disclosure (colleagues need to know), external disclosure (clients and stakeholders), and regulatory disclosure (where the EU AI Act or sector rules require it). Avoids over-disclosure that creates unnecessary concern.

The template also includes Schedule A: Current AI Classification Register, a quick-reference table listing every AI tool in use, its classification (ADOPT / FORMALISE / MONITOR / BLOCK), and the key conditions attached. This means staff can read the policy and immediately see what's approved, what needs governance sign-off, and what's off-limits, without opening a separate spreadsheet. The schedule carries a snapshot; the full inventory (Tool 1.1) remains the master record.
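Because the schedule is a snapshot of the master inventory, it can be regenerated rather than maintained by hand. The following is a minimal sketch, assuming the inventory is available as a list of records with `tool`, `classification` and `conditions` keys; those key names and the plain-text layout are assumptions for this example, not the template's actual format.

```python
from datetime import date

# Display order for the four classifications.
ORDER = ["ADOPT", "FORMALISE", "MONITOR", "BLOCK"]


def schedule_a(inventory, as_of):
    """Render a dated plain-text snapshot of the classification register.

    Rows are grouped by classification, then sorted by tool name.
    An unknown classification raises ValueError, which surfaces
    typos in the master record instead of hiding them.
    """
    rows = sorted(
        inventory,
        key=lambda r: (ORDER.index(r["classification"]), r["tool"].lower()),
    )
    width = max((len(r["tool"]) for r in rows), default=0)
    title = "Schedule A: Current AI Classification Register"
    lines = [f"{title} (snapshot {as_of.isoformat()})"]
    for r in rows:
        lines.append(
            f"{r['tool']:<{width}}  {r['classification']:<9}  {r['conditions']}"
        )
    return "\n".join(lines)
```

Stamping the snapshot date into the heading makes it obvious when the schedule has drifted from the full inventory and needs regenerating.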

A completed policy should run 5-9 pages, depending on your organisation's complexity, and be written in plain language, not legalese. Every section has a named owner. Every AI use case is classified. The document is dated, has a review date no more than six months out, and is structured for board approval with a clear record that directors have considered and endorsed the organisation's AI position.

Disclaimer

The AI Governance Toolkit is provided by the Institute of Directors (IoD) Ireland for general informational purposes only. It is intended as a practical guide to support members. The toolkit does not constitute legal, regulatory or professional advice. IoD Ireland accepts no liability for any loss, damage or consequence arising from the use of, or reliance on, this material.